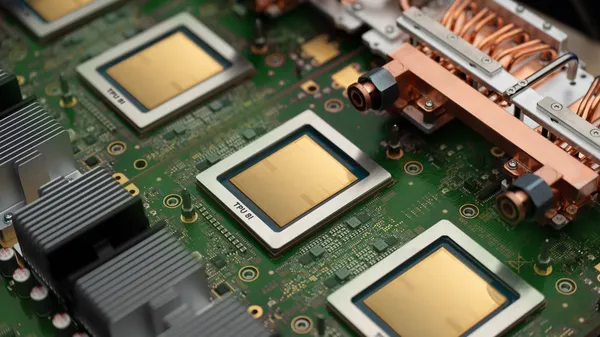

Google’s been cranking out TPUs for a while now, and the eighth generation is finally here. But instead of a single chip that tries to do everything, they’ve split the workload into two specialized variants. That’s a smart move, honestly.

The first chip is built for inference and reasoning. Think of it as the “thinker” — it handles the heavy lifting when an AI model actually processes a query, generates text, or makes a decision. The second chip is a training beast, optimized for the compute-heavy backpropagation and gradient updates that teach models from scratch.

This split makes more sense than it might sound. In the agentic era — where AI doesn’t just answer questions but takes actions, plans steps, and interacts with tools — the demands on hardware are wildly different. Training a massive model requires raw floating-point throughput and memory bandwidth. Running that model in production requires low latency and efficient batching. Trying to optimize one chip for both ends up compromising on both.
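
To put rough numbers on that, here’s a back-of-the-envelope sketch in Python. It’s a standard roofline-style estimate, not anything from Google: the function name, the 8192-wide layer, and the batch sizes are my own assumptions for illustration. It counts how many FLOPs one dense layer performs per byte it moves, for a single token versus a large batch.

```python
def matmul_arithmetic_intensity(batch_tokens: int, d_model: int,
                                bytes_per_value: int = 2) -> float:
    """FLOPs per byte moved for one [batch_tokens, d_model] x [d_model, d_model] matmul.

    Assumes 2-byte values (e.g. bf16) by default and ignores caching effects.
    """
    # Each output element needs d_model multiply-adds, i.e. 2 * d_model FLOPs.
    flops = 2 * batch_tokens * d_model * d_model
    # The full weight matrix is read once, regardless of batch size.
    weight_bytes = d_model * d_model * bytes_per_value
    # Activations: read the input once, write the output once.
    activation_bytes = 2 * batch_tokens * d_model * bytes_per_value
    return flops / (weight_bytes + activation_bytes)


# Hypothetical layer width, chosen only for illustration.
D_MODEL = 8192

single_query = matmul_arithmetic_intensity(batch_tokens=1, d_model=D_MODEL)
big_batch = matmul_arithmetic_intensity(batch_tokens=4096, d_model=D_MODEL)

print(f"1 token:     {single_query:.2f} FLOPs/byte")
print(f"4096 tokens: {big_batch:.2f} FLOPs/byte")
```

With one token in flight, the layer does about one FLOP per byte it reads, so memory bandwidth is the limit. At 4,096 tokens it does about 2,048, so raw compute is the limit. That gap is why serving leans so hard on batching, and why a chip tuned for one regime gives something up in the other.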

Google’s not the first to go this route. Nvidia’s lineup is already segmented: the H100 and B200 lean toward training, while the L40S and, to some extent, the Grace Hopper superchips are pitched more at inference. But Google’s advantage is vertical integration: they control the whole stack, from the chip design to the compiler (XLA) to the frameworks (TensorFlow and JAX) to the cloud infrastructure. That means they can squeeze out efficiencies that third-party chip buyers can’t.

I’m curious about the raw numbers. Google hasn’t published exact teraflops or memory specs yet, which is typical for their TPU launches, where details tend to get drip-fed. But if the performance gains track with previous generations (each one has been roughly 2x-3x better than the last), we’re looking at a serious jump. The earlier TPU v5p already hit some impressive benchmarks for large language models.

What I really want to see is how these chips handle agentic workflows specifically. Agents don’t just run a single forward pass: they loop, call tools, manage context windows, and sometimes need to re-evaluate earlier steps. That’s a different profile from standard chatbot inference. If the reasoning chip can handle that kind of branching without latency spikes, it’ll be a big deal.
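
Here’s a toy sketch of that loop, just to show the shape of the workload. Nothing here calls a real model or a real API: `toy_model`, `run_agent`, and all the token counts are hypothetical placeholders I made up. The point is only that one user request turns into several model calls, each over a longer context than the last.

```python
from dataclasses import dataclass, field


@dataclass
class AgentTrace:
    """Tallies what a single agent request asks of the accelerator."""
    model_calls: int = 0
    tool_calls: int = 0
    context_tokens: list = field(default_factory=list)  # context size at each model call


def toy_model(step: int, max_tool_steps: int) -> str:
    """Hypothetical stand-in for a model: requests a tool for the first
    max_tool_steps turns, then produces a final answer."""
    return "call_tool" if step < max_tool_steps else "final_answer"


def run_agent(prompt_tokens: int, max_tool_steps: int,
              tokens_per_model_turn: int = 50,
              tokens_per_tool_result: int = 200) -> AgentTrace:
    """Simulate one agent request and record how the context grows."""
    trace = AgentTrace()
    context = prompt_tokens
    step = 0
    while True:
        # Every turn is a fresh model call over the whole context so far.
        trace.model_calls += 1
        trace.context_tokens.append(context)
        action = toy_model(step, max_tool_steps)
        context += tokens_per_model_turn  # the model's own output joins the context
        if action == "final_answer":
            return trace
        # The tool result is appended too, so the next call sees a longer context.
        trace.tool_calls += 1
        context += tokens_per_tool_result
        step += 1


trace = run_agent(prompt_tokens=1000, max_tool_steps=3)
print(trace.model_calls, trace.tool_calls, trace.context_tokens)
# prints: 4 3 [1000, 1250, 1500, 1750]
```

A plain chatbot turn would be the `max_tool_steps=0` case: one model call, one context. Three tool steps already mean four calls over a context that keeps growing, and that per-request variability is exactly what I’d want to see benchmarked.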

Of course, there’s the usual caveat: you can’t buy these chips off the shelf. They’re exclusive to Google Cloud, so you’re renting access by the hour. That’s fine for enterprises already on GCP, but it locks out smaller teams or anyone who prefers on-prem or AWS. Nvidia still dominates there, and I don’t see that changing soon.

Still, it’s good to see specialization happening. The one-size-fits-all accelerator era is ending, and that’s going to push the whole industry forward. Google’s betting that agents are the next big workload, and they’re building hardware to match. I’m skeptical about the “agentic era” hype — we’ve been hearing this for years — but if anyone can make it work, it’s a company that controls both the silicon and the software.
